What are your statistical principles?

#1: Data can be singular or plural, depending on your mood

The well-known statistical methodologist Andrew Gelman recently posed an interesting question on his blog: what are your statistical principles? [Andrew Gelman]. I think about this question a fair amount, both in my reading and thinking about social science and in my day job leading an analytics and data science team. Both have taught me a healthy respect for the power of statistics and data to make predictions and decisions, as well as a healthy respect for their fundamental inability to provide the certainty that people crave.

I hope that laying out my principles might help my readers and followers avoid my mistakes, and spare them a life of crime (against data).

Life is multi-method and truth is conditional

There’s no method that is always appropriate, and there is no right answer. A fun thing you learn in intro methods in grad school is that linear regression represents a correlational relationship between the independent variables and the dependent variable, not a causal one. The one exception, when correlation is causation, is when your regression has fully captured all the relevant variables and is “correctly specified”1. And that is kind of a joke, of course, because with real-world data it’s an iron-clad guarantee that your data set will never capture all the relevant variables and your model won’t be correctly specified.
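To make the “correctly specified” point concrete, here is a minimal simulation sketch of omitted-variable bias, using only Python’s standard library. It is my own illustration, not a canonical one: the outcome truly depends on `x` with coefficient 1.0 and on a confounder `z` with coefficient 2.0, and `z` also drives `x`. The function names and all the coefficients are hypothetical.

```python
import random


def simulate(n=100_000, seed=0):
    """Hypothetical data: y = 1.0*x + 2.0*z + noise, where z also drives x."""
    rng = random.Random(seed)
    xs, zs, ys = [], [], []
    for _ in range(n):
        z = rng.gauss(0, 1)
        x = 0.8 * z + rng.gauss(0, 1)  # x is correlated with the confounder z
        y = 1.0 * x + 2.0 * z + rng.gauss(0, 1)
        xs.append(x)
        zs.append(z)
        ys.append(y)
    return xs, zs, ys


def _center(v):
    m = sum(v) / len(v)
    return [a - m for a in v]


def _dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def slope_x_only(xs, ys):
    """OLS slope of y on x alone, i.e. with z omitted (misspecified)."""
    x, y = _center(xs), _center(ys)
    return _dot(x, y) / _dot(x, x)


def slopes_x_and_z(xs, zs, ys):
    """OLS coefficients on x and z, solving the 2x2 normal equations (Cramer's rule)."""
    x, z, y = _center(xs), _center(zs), _center(ys)
    sxx, szz, sxz = _dot(x, x), _dot(z, z), _dot(x, z)
    sxy, szy = _dot(x, y), _dot(z, y)
    det = sxx * szz - sxz ** 2
    bx = (szz * sxy - sxz * szy) / det
    bz = (sxx * szy - sxz * sxy) / det
    return bx, bz


if __name__ == "__main__":
    xs, zs, ys = simulate()
    # Analytically, the x-only slope converges to 3.24 / 1.64 (about 1.98), not 1.0.
    print("x only:", slope_x_only(xs, ys))
    print("x and z:", slopes_x_and_z(xs, zs, ys))
```

The x-only regression is a perfectly good description of the correlation in the data; it just isn’t the causal effect of `x`. Adding `z` recovers the true coefficients here only because, in a simulation, we know `z` is the one missing variable. With real data you never get that guarantee.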

It’s a simple catch-22 with a deeper lesson: you can’t use statistics to find the indisputable, underlying truth. Another method, facially appropriate to the situation, may give you different findings. Even if all applicable methods agree for a given data set, the “truth” you’ve uncovered is going to be conditional and subject to change. I studied Congress in graduate school, and the rules by which Congress operates (both literal and figurative) are very different in 2021 than they were in earlier decades, and so are the nature and magnitude of its quantitative regularities. The same is true in virtually every sphere of human life worth studying. Even if one were to somehow figure out The Truth about a given quantitative relationship, that relationship would change across time and place.

This is what I’ve taken away from the pithy saying that “all models are wrong, but some are useful”. In most applications, whether practical or academic, I think statistics is best conceived as a rigorous test of whether reality is consistent with your assumptions. The methods you use are going to be based on your assumptions, and on which dimensions of consistency you care about most.

For the real nerds: choosing a side in the “Frequentist vs. Bayesian” wars is a mistake; both are useful frameworks in different contexts and conditions.

The most important uncertainty is non-statistical in nature

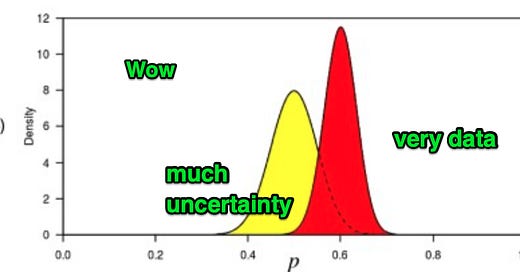

This is a really big one that even experienced practitioners do not take seriously often enough. When you make a quantitative finding, there is statistical uncertainty and there is fundamental uncertainty. Statistical uncertainty is expressed in the standard errors or credible intervals or whatever measurement of uncertainty you prefer. In my day job, we might run a pricing experiment for subscription plans and express that statistical uncertainty with a graph like this:

But the really gnarly uncertainty is fundamental uncertainty, which is best thought of as “what if something changes to make your findings untrue?” What if the preferences of our customers change radically, whether through substitution with a new technology or big changes in market conditions? Then all that nicely calculated statistical uncertainty goes out the window.
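To make the distinction concrete, here is a minimal sketch of the statistical half for a subscription pricing test: the difference in conversion rates between two plans, with a standard Wald confidence interval. The function name and the counts are hypothetical, and this is one common way to summarize such a test, not necessarily the one my team uses.

```python
from math import sqrt
from statistics import NormalDist


def diff_in_proportions_ci(conv_a, n_a, conv_b, n_b, level=0.95):
    """Difference in conversion rates (B minus A) with a Wald confidence interval.

    This quantifies statistical uncertainty only: sampling noise, assuming the
    test population and conditions resemble the ones you will actually face.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Standard error of the difference between two independent proportions.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    diff = p_b - p_a
    return diff, (diff - z * se, diff + z * se)


if __name__ == "__main__":
    # Hypothetical test: 10,000 visitors per arm; plan B is the higher price.
    diff, (low, high) = diff_in_proportions_ci(400, 10_000, 340, 10_000)
    print(f"B - A conversion: {diff:+.4f}, 95% CI [{low:+.4f}, {high:+.4f}]")
```

With these made-up numbers the interval runs from roughly -1.1 to -0.1 percentage points. Nothing in that calculation accounts for customers’ preferences shifting after the test ends; that is the fundamental uncertainty, and no formula here will capture it.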

Life, as it turns out, is a big series of events that are fundamentally uncertain. This should play a major role in your thinking about decision-making through data.

Context is everything

The data cannot speak for itself, because data does not spring full-grown from the forehead of Zeus. Data from any artificial system, whether social science or hardware or software, emerges from a human context. The data you have is inextricably human, whether it is self-reported, human-reported, or machine-reported (e.g., by a seismometer or an engine gauge). That human nature shows up not just in what is reported, but in how it is formatted and in what is being measured and recorded.

When making decisions based on quantitative data, it’s almost impossible to make good decisions without knowing how the data was created. If you’re systematically missing data, or if systematic logic governs how fields are filled in, you need to take that into account. That’s the only way to know the conditions you need to attach to any quantitative finding.

Get the simple stuff right, checklist where possible

There’s a lot of simple stuff that I get wrong if I’m not careful. Look at scatterplots of your data. Visually inspect tables to see if anything looks off. Check for missing data, and if there is missing data, do balance checks to see whether that missingness is plausibly random. If you’re trying to do prediction, be very careful to roll back your data set appropriately so that the out-of-sample data will be comparable.2 There’s a lot of stuff like this. If you notice yourself making similar mistakes more than once, build good habits by creating and following checklists.
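As one example of a missingness balance check, here is a minimal sketch that compares a covariate between rows where a field is missing and rows where it is present, using a standardized mean difference (SMD). The function name, field names, and records are all hypothetical; some practitioners treat an absolute SMD above roughly 0.1 as a sign of imbalance, but treat any threshold as a rule of thumb.

```python
from statistics import mean, stdev


def missingness_smd(rows, target, covariate):
    """Standardized mean difference in `covariate` between rows where `target`
    is missing and rows where it is present.

    A value near 0 is consistent with (though not proof of) random missingness;
    a value far from 0 says missingness is related to the covariate.
    """
    missing = [r[covariate] for r in rows if r.get(target) is None]
    present = [r[covariate] for r in rows if r.get(target) is not None]
    if len(missing) < 2 or len(present) < 2:
        raise ValueError("need at least two rows in each group")
    # Pooled SD: square root of the average of the two group variances.
    pooled_sd = ((stdev(missing) ** 2 + stdev(present) ** 2) / 2) ** 0.5
    return (mean(missing) - mean(present)) / pooled_sd


if __name__ == "__main__":
    # Made-up customer records: income is missing mostly for older customers.
    rows = [
        {"age": 22, "income": 40}, {"age": 25, "income": 52},
        {"age": 31, "income": 61}, {"age": 35, "income": 58},
        {"age": 58, "income": None}, {"age": 63, "income": None},
        {"age": 67, "income": None}, {"age": 29, "income": None},
    ]
    print(round(missingness_smd(rows, "income", "age"), 2))
```

Here the SMD comes out around 2: customers with missing income are much older on average, so dropping those rows would quietly skew any finding about income.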

Everything else on this list stymies brilliant statisticians, the best and the brightest, and foils the best-laid plans of mice and men through the sheer ineffability of the phenomena being studied. Treating an ordinal variable like a numeric variable isn’t like that, so don’t f*ck it up.
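Here is why the ordinal/numeric mix-up matters, in a minimal sketch: an ordinal scale only tells you the order of its categories, so any order-preserving numeric coding is equally defensible, and group means can flip depending on which coding you pick. The four-level scale, the responses, and the function names are hypothetical.

```python
from statistics import mean, median_low

# Hypothetical ordinal rating scale, lowest to highest.
LEVELS = ["poor", "fair", "good", "excellent"]


def mean_score(responses, scores):
    """Mean after mapping each level to a numeric score (an assumption!)."""
    lookup = dict(zip(LEVELS, scores))
    return mean(lookup[r] for r in responses)


def median_level(responses):
    """Median category; depends only on the ordering of LEVELS."""
    ranks = [LEVELS.index(r) for r in responses]
    return LEVELS[median_low(ranks)]


if __name__ == "__main__":
    group_a = ["fair", "good", "good", "good", "good"]
    group_b = ["poor", "poor", "good", "excellent", "excellent"]
    for scores in [(1, 2, 3, 4), (1, 2, 3, 10)]:  # both preserve the order
        print(scores, mean_score(group_a, scores), mean_score(group_b, scores))
    print(median_level(group_a), median_level(group_b))
```

Scoring the levels 1 through 4 gives group A the higher mean; scoring them 1, 2, 3, 10 gives group B the higher mean, even though nothing about the underlying responses changed. The median category stays the same under any order-preserving coding. For modeling, methods built for ordinal outcomes (for example, ordered logistic regression) avoid pretending the categories are evenly spaced.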

There are no methodological free lunches

This one emerges organically, I think, from the ones I’ve already laid out: computers cannot substitute for human thinking. Machine learning algorithms can generate highly accurate predictions and accomplish incredibly hard tasks like generating readable, believable text [MIT Technology Review]. However, the really hard work is in the mental modeling and contextualizing that happens before the machine: understanding the situation and needs, appropriately preparing data, and deciding under what context you can use the output. “Machine learning” isn’t a garbage can into which you can throw any old data and get results that are guaranteed to be applicable for your needs.

There is no free lunch when it comes to avoiding the hard work of understanding a problem. This is why I shake my head at the claims that “data science will fix $social_problem”. You can’t fix a social problem unless you understand it well enough to fix it, and no amount of fancy models will allow you to skip that step.

1. I.e., your assumptions about the nature of the variables’ relationships to each other are all correct. This one is hard to explain.

2. This statement may make no sense to you unless you’re a practitioner; I regret to inform you that the only way to really learn this is by getting it wrong, probably in an embarrassing manner.