If you’ve been regularly reading this newsletter you may have picked up on a certain skepticism about the power of machine learning and data science. To summarize, I think the power of machine learning techniques in decision-making is generally overestimated, since those techniques are inevitably applied to human-generated data. When applied to automated decision-making, they can often reproduce or even reinforce problems of circularity or bias contained in the underlying data.

I think I may have overdone the skepticism, so I wanted to lay out a case study for how data science can be applied productively in a social problem-solving or analytical context. Even if all that an ML technique does is “summarize information that is already there”, that can be incredibly useful. Specifically, a common problem facing decision-makers is how to synthesize many different data series or variables in order to prioritize the geographies or categories where help is most needed. Where do we need social workers, or school staff, or police patrols? Thankfully, this falls into a core ML area: dimension reduction.

What We Already (Sorta) Know

There are a lot of social ills in the United States, and they are all thoroughly entangled with each other. The reality of structural racism and inequality of opportunity often means that whether you’re looking at educational outcomes, or economic outcomes, or even Covid-19 outcomes, the maps often all look the same [Standard Errors]. That obvious phenomenon suggests that there might be some way to condense that information down further, to an underlying variable that all these same-looking maps are tapping into. Thankfully, there is a great source of high-resolution data to do so, the excellent “Opportunity Atlas” which I’ve mentioned before [Opportunity Atlas].

We can easily create a dataset of California, with a lot of important social outcomes at the census tract level. We don’t have full coverage of everything we’d like, but we can pull out a bunch of different statistics like household income at age 35, how many kids grew up with two parents, educational attainment, incarceration rates, and a few more covariates which we’d expect to reflect the general conditions of life in the tract. From this dataset, it’s easy to run a regression comparing two outcomes - such as, say, whether the incarceration rate predicts local household income:
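As a rough sketch of what such a regression looks like in code, the block below fits a one-variable ordinary least squares line with NumPy. Everything in it is an assumption made for illustration: the data are synthetic stand-ins, not Opportunity Atlas values, and the variable names, coefficients, and noise levels are invented.

```python
import numpy as np

rng = np.random.default_rng(0)
n_tracts = 500

# Synthetic stand-in for tract-level data (NOT real Opportunity Atlas values).
incarceration_rate = rng.uniform(0.0, 0.10, n_tracts)
household_income = (
    60_000 - 300_000 * incarceration_rate + rng.normal(0, 5_000, n_tracts)
)

# Ordinary least squares: income = intercept + slope * incarceration_rate.
# np.polyfit returns coefficients from the highest degree down.
slope, intercept = np.polyfit(incarceration_rate, household_income, deg=1)

# R^2: share of the variance in income explained by the fitted line.
predicted = intercept + slope * incarceration_rate
ss_res = np.sum((household_income - predicted) ** 2)
ss_tot = np.sum((household_income - household_income.mean()) ** 2)
r_squared = 1 - ss_res / ss_tot

print(f"slope={slope:,.0f}  intercept={intercept:,.0f}  R^2={r_squared:.2f}")
```

With real data you would swap the two synthetic arrays for the corresponding tract-level columns; the fitting and R^2 steps stay the same.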

Incarceration rate predicts income really well. Some theories come naturally to mind - people in jail are not employed, and earn less, and ex-cons have fewer opportunities post-release. Perhaps the mechanism runs through household formation - i.e., when a working spouse is incarcerated, you turn one two-income household into two much lower-income households. More worryingly for our inference, perhaps the causality runs the other way: maybe lower-income folks aren’t able to afford criminal defense and end up in jail. But we’re smart, we know correlation is not causation - perhaps the same structural factors that lead to poverty also lead to incarceration.

The concept that we’re dancing around here is the idea of a latent variable [Wikipedia], a property that cannot be directly measured but might have many measurable aspects. Perhaps these superficially different variables - income, incarceration rate, educational attainment, etc - are all different expressions of the same underlying latent variable, which is the overall wellbeing of the population. Some folks might choose to call this “social wellbeing”, whereas a different analytical lens might call it “structural privilege”. But we’re not getting into causation here, only measurement.

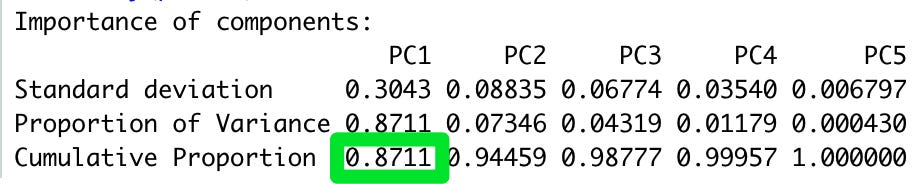

To do this, we can turn to an old statistical technique dating back to Karl Pearson in 1901, a so-called “principal component analysis” or PCA for short [Wikipedia]. PCA seeks to take a wide variety of “dimensions” (the social variables mentioned above) and to reduce them to a smaller set of, you guessed it, principal components. The goal of our research is to find a single component which explains as much as possible of a given set of variables: household income, how many people grew up in two-parent households, percent employed, percent incarcerated, educational attainment, and poverty rates. It turns out that these social variables really all do run together: a single index is capable of capturing 87% of the variation in the dataset.
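To make the mechanics concrete, here is a minimal sketch of extracting a single index with PCA, computed through a singular value decomposition in NumPy. It assumes synthetic data: six made-up variables are generated from one hidden factor plus noise, so the names, loadings, and noise level are all hypothetical and the output is not meant to reproduce the figure quoted above. The columns are standardized first, since otherwise a variable measured in dollars would swamp one measured in percentages.

```python
import numpy as np

rng = np.random.default_rng(0)
n_tracts = 500

# Hypothetical variable names and loadings, for illustration only.
names = ["income", "two_parent", "employed",
         "incarcerated", "education", "poverty"]
loadings = np.array([1.0, 0.9, 0.8, -0.8, 0.9, -1.0])

# Synthetic data: one hidden factor drives all six variables, plus noise.
latent = rng.normal(size=n_tracts)
X = latent[:, None] * loadings + rng.normal(scale=0.4, size=(n_tracts, 6))

# Standardize each column to mean 0 and standard deviation 1.
Z = (X - X.mean(axis=0)) / X.std(axis=0)

# PCA via SVD: the rows of Vt are the principal directions, and the
# squared singular values are proportional to the variance each explains.
_, S, Vt = np.linalg.svd(Z, full_matrices=False)
explained = S**2 / np.sum(S**2)

first_component = Vt[0]
index = Z @ first_component  # one score per tract

# The sign of a principal component is arbitrary; orient it so that a
# higher index goes with higher income.
if first_component[0] < 0:
    first_component, index = -first_component, -index

print(f"Share of variance in first component: {explained[0]:.2f}")
for name, weight in zip(names, first_component):
    print(f"{name:>12}: {weight:+.2f}")
```

The printed weights show how each variable feeds into the index: variables that move with the hidden factor get positive weights and those that move against it get negative ones.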

It turns out that this index also correlates reasonably well with other social outcomes of interest not included in the index, such as marriage rates. This suggests that even though our index is not perfectly capturing anything in particular and isn’t predicting anything much at all, it’s doing an effective job of summarizing a bunch of disparate information about a wide variety of social facts and boiling it down to a single number that carries a lot of information. That’s why our totally-made-up and sloppily-constructed “Social Wellbeing Index” can predict marriage at a tract level with an r^2 of 0.67:
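A check of this kind can be sketched as follows: build the index from one set of variables, then see how much of the variation in a held-out variable it explains. The data are again synthetic and the names are hypothetical (`first_pc_scores` is a helper written for this example, and `marriage_rate` is a made-up series), so the resulting number says nothing about the real figure. With a single predictor, the r^2 of the regression equals the squared Pearson correlation, which is what the code computes.

```python
import numpy as np

def first_pc_scores(X):
    """Score each row on the first principal component of standardized X."""
    Z = (X - X.mean(axis=0)) / X.std(axis=0)
    _, _, Vt = np.linalg.svd(Z, full_matrices=False)
    return Z @ Vt[0]

rng = np.random.default_rng(1)
n_tracts = 500

# Synthetic data: a hidden factor drives six index variables...
latent = rng.normal(size=n_tracts)
loadings = np.array([1.0, 0.9, 0.8, -0.8, 0.9, -1.0])
X = latent[:, None] * loadings + rng.normal(scale=0.4, size=(n_tracts, 6))

# ...and also a held-out outcome that is NOT used to build the index.
marriage_rate = 0.5 + 0.1 * latent + rng.normal(scale=0.1, size=n_tracts)

index = first_pc_scores(X)

# For a one-predictor regression, r^2 is the squared correlation.
# Squaring also makes the arbitrary sign of the component irrelevant.
r = np.corrcoef(index, marriage_rate)[0, 1]
r_squared = r**2

print(f"r^2 of index vs. held-out outcome: {r_squared:.2f}")
```

Keeping the outcome out of the index is the point of the exercise: if the index still tracks it, the index is summarizing something shared across the variables rather than just echoing its own inputs.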

This is literally grade-school stuff by the standards of fancy machine learning, but it is a good example of how powerful simple techniques can be. The application of PCA to social data here isn’t going to “solve” anything, nor does it actually create any new information that was not in your original data set. However, by providing a single index it has provided you a marvelous tool for decision-making and analysis out of a mess of apparently-disparate data. This is a simple application of a common school of techniques called “dimension reduction” that similarly don’t do anything more than summarize what’s already out there. This type of information structuring and pre-processing is incredibly valuable when you’re looking to make assessments for prioritization, or classification, or routing, or really any sort of logistical problem.

Simple but powerful: just like a potato.