How should you read news about polling?

Part one of a two-part series

Greetings from Tel Aviv! I’m out here on a work trip, and blissfully disconnected from the flurry of political news back in the States. With a little distance, I’ve been thinking a lot about polling and how often it drives the conversation about politics. With Democratic primary season coming up, I thought it’d be good service journalism to give some perspective on how a skeptical news consumer should read these headlines. Polling is weird in primary season, and even more so in 2020 - it’s running into a buzz-saw of technological and political change, and I want to share some of those changes with you. So what’s happening?

Polling is hard in 2020.

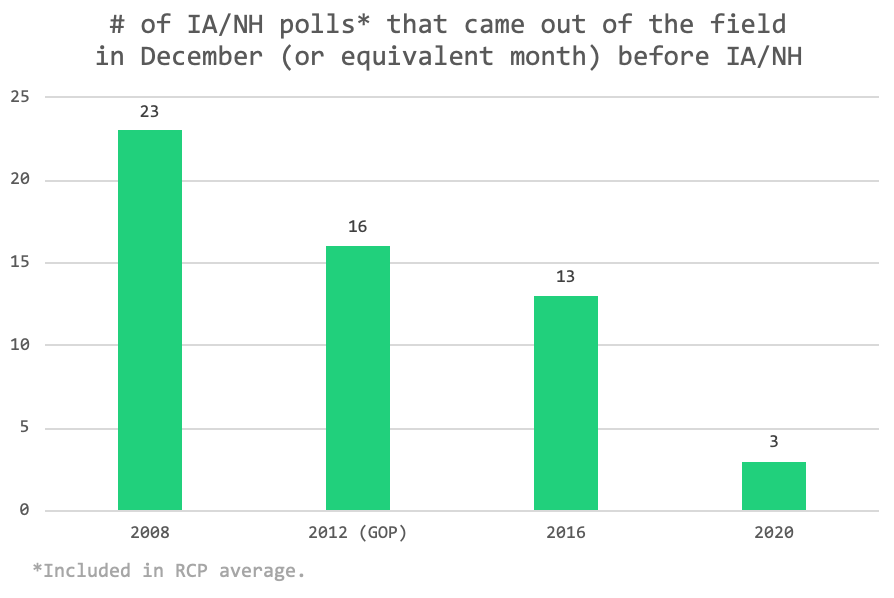

There are far fewer polls than there were in prior cycles. You may have picked up on this even without hard data - it’s easiest to notice because fewer polls mean fewer stories about polling and poll results. And that’s definitely true [link]:

So that’s crazy, and it messes with how people have come to think about polling. Nate Silver and other polling experts constantly repeat that the most accurate way to measure the state of the race is to ignore outlier polls and look at the average. Well, with only three polls, averaging isn’t going to improve your certainty very much - every poll is an outlier!
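To put rough numbers on why the count of polls matters, here is a minimal sketch. It assumes each poll is a simple random sample and that the polls are independent, which real polls are not, so treat it as a best case. The 25% support figure and the 600 respondents per poll are made up for illustration.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for a share p measured on n respondents."""
    return z * math.sqrt(p * (1 - p) / n)

def margin_of_average(p, n, k, z=1.96):
    """Margin of error for the average of k independent polls of n respondents each.

    Averaging k equal-sized polls behaves like one poll of n * k respondents.
    """
    return margin_of_error(p, n * k, z)

# Hypothetical race: a candidate at 25%, polls of 600 respondents each.
for k in (1, 3, 10):
    moe = 100 * margin_of_average(0.25, 600, k)
    print(f"{k:>2} poll(s): +/- {moe:.1f} points")
#  1 poll(s): +/- 3.5 points
#  3 poll(s): +/- 2.0 points
# 10 poll(s): +/- 1.1 points
```

This only covers random sampling error. If every poll misses the same kinds of people, averaging does nothing to fix that.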

The culprit is the skyrocketing cost of telephone polls, which is driven by falling “response rates”: the share of people called who complete the survey. Traditionally telephone polls are done by random dialing of phone numbers - either literally dialing random digits or dialing a randomly selected list of possible/likely voters. Polling houses need human beings to call those numbers - and the lower response rates are, the more person-hours you need to spend to get a person on the phone. The well-respected polling house Pew found response rates dropped from 9% to 6% just between 2016 and 2018 [link].

Response rates have been falling for a long time, but it’s gotten worse recently. In the 90s, response rates could be as high as 1 in 3, meaning 1 in 3 people you dial would not only pick up but would answer your survey questions. Now it’s roughly 1 in 17. That has made polling a lot more expensive, as you need to call that many more people.
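The arithmetic is simple enough to sketch. This assumes a hypothetical poll that wants 1,000 completed interviews, and it treats every dial as equally likely to produce a complete, which is a simplification. The response rates are the ones quoted above.

```python
def dials_needed(completes, response_rate):
    """Expected number of dials to get the target number of completed interviews."""
    return completes / response_rate

target = 1000  # hypothetical number of completed interviews

rates = [
    ("1990s, 1 in 3", 1 / 3),
    ("2016, 9%", 0.09),
    ("2018, 6%", 0.06),
]
for label, rate in rates:
    print(f"{label}: about {dials_needed(target, rate):,.0f} dials")
# 1990s, 1 in 3: about 3,000 dials
# 2016, 9%: about 11,111 dials
# 2018, 6%: about 16,667 dials
```

Same poll, more than five times the dialing compared to the 90s, and every one of those dials is interviewer time someone has to pay for.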

It also means we start to get concerned about potential ~bias~ in the polls. This doesn’t mean that someone is fixing the polls - instead it means that the people we’re reaching are less and less likely to represent the population as a whole. Intuitively it makes sense - you don’t answer unknown numbers calling your phone because only a weirdo would do that. Well, the more that phone spam proliferates and the lower response rates go, the fewer normies answer their phone and the more we get concerned about bias.

Well sh*t. Are there other options?

There are internet polls! Internet polling is quite different from phone polling. While phone polls reach out into the universe to go find respondents, internet polls generally attempt to reach people where they are. This sometimes means running surveys in little pop-ups on websites, or using so-called “panels”. Big internet survey firms like YouGov or SurveyMonkey have large lists of people signed up to be eligible for surveys, and they’ll select some of them to answer depending on the questions asked. For a political survey, that may be registered voters.

This is a fundamentally different approach to polling than traditional phone polls. In statistical terms, phone polls are called a probability sample - they take the whole universe and try to get a random sample. Internet polls are nonprobability samples - it’s what is called a convenience sample. There are statistical methods that we can use to try to correct for this, such as reweighting the sample so it looks like what we think the electorate “should” look like. These are tricky and it’s hard to tell whether they actually work.
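Here is a minimal sketch of what reweighting means in practice. Every number in it is invented for illustration: two age groups, a sample that skews older, and an assumed 50/50 electorate. Real pollsters weight on many more variables at once, so this is the idea and not anyone’s actual method.

```python
# Hypothetical sample: group -> (share of respondents, support for candidate A)
sample = {
    "under 45": (0.30, 0.60),
    "45 and over": (0.70, 0.40),
}

# Assumed share of each group in the electorate. This is the pollster's guess.
target = {"under 45": 0.50, "45 and over": 0.50}

def raw_estimate(sample):
    """Support for A if we take the sample at face value."""
    return sum(share * support for share, support in sample.values())

def weights(sample, target):
    """Weight per respondent in each group: target share / sample share."""
    return {g: target[g] / share for g, (share, _) in sample.items()}

def weighted_estimate(sample, target):
    """Support for A after reweighting each group to its target share."""
    return sum(target[g] * support for g, (_, support) in sample.items())

print(f"raw:      {raw_estimate(sample):.0%}")               # raw:      46%
print(f"weighted: {weighted_estimate(sample, target):.0%}")  # weighted: 50%
print({g: round(w, 2) for g, w in weights(sample, target).items()})
# {'under 45': 1.67, '45 and over': 0.71}
```

The answer moves four points purely because of what we assumed the electorate looks like. It also quietly assumes the under-45s who took the survey think like the under-45s who didn’t, which is exactly the part that’s hard to check.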

It gets worse, though - because it’s pretty hard to tell what’s actually right. A well-known phenomenon in survey research is what’s called a “mode effect”, which is when people’s answers differ systematically depending on how you ask. A robo-poll may get different answers than a poll with a live interviewer, and both may differ from a written internet poll. Some people think this is because people are hesitant to give socially unacceptable answers to a real human on the phone. This is called the “Bradley Effect” or the “Shy Tory” effect and may or may not be real.

Can you sum this up?

Polling is bewildering right now. You should look at the average of polls rather than just one or two. But because no one answers the phone, there aren’t enough polls for this to really work. And even worse, they might all have the same problems with reaching the right people. You can do things to correct for this, but the problem has gotten so severe so quickly that nobody really knows yet if they work.

The only thing saving us is that polling right now doesn’t matter.

And that’s the subject of Part 2 next week.

Further reading:

Phone polling in crisis again [link]

How online polling breaks historical data [link]

The American Association for Public Opinion Research report on online polling [WARNING - BORING]

The persistent myth of the Bradley Effect [link]