Don't Read Election Forecasts

What if weather forecasts steered the hurricane?

I love political statistics. As a recovering political scientist and briefly-professional election-botherer, I count them as both a job and a passion. When someone asks what I think of Nate Silver, I respond with a hearty and enthusiastic, “He’s fine.” More seriously, I deeply appreciate everything he and his quant-y peers (such as Nate Cohn at the NYT Upshot) have done to bring the concepts of political science to the mass public. So I was fascinated to read about this recent Journal of Politics paper by Westwood, Messing, and Lelkes [link], which claims that election forecasts make voters, particularly Democrats, overly complacent and hurt turnout.

Long story short: this paper is pretty convincing that election forecasts are confusing and appear to discourage voting. I’d suggest that you keep your consumption of these forecasts to a minimum. While I’d also generally recommend keeping consumption of all political news to a minimum, if you’ve read this far you probably have the opposite problem. If you must look at something, polling averages like the 538 average [538] are going to keep you much more informed about where the race stands today.

What the research says

A big part of why I think this paper is so convincing is the sheer breadth and depth of the data that is brought to bear. Most social science papers these days are essentially “single analysis” papers, which is to say they’ll run a single experiment or put together a data set for regression analysis. Most of the paper is then taken up with sanity checks and pressure tests on that single analysis. This one is a bit different - it’s a mixed-methods paper that uses both “macro” and “micro” data, encompassing a mix of survey experiments and observational historical data.

So the key reason forecasts are interesting (“the motivation” as fancy academics say) is that voters care less about elections that are “in the bag”. This in turn makes them less likely to vote. This observation is well-founded in theory, historical data, and common sense: voting can be a pain and voters are less likely to drag themselves off the couch if their favored candidate is cruising to a sure-thing victory (or defeat). This is true regardless of whether anyone is drawing up forecasts, so in principle the forecasts themselves shouldn’t matter.

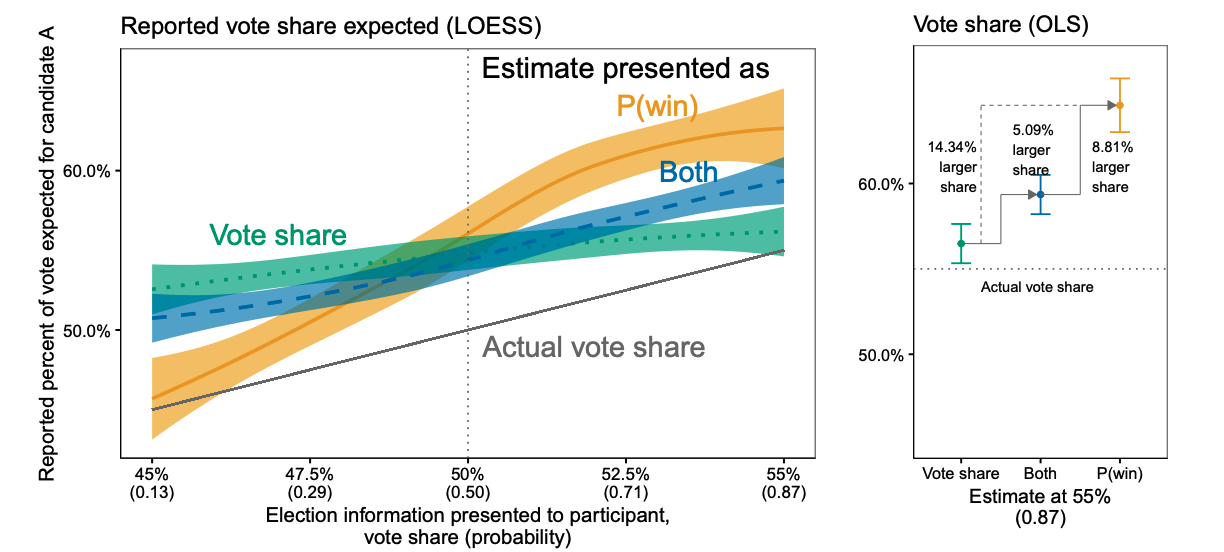

Except there is another well-known insight: people are bad at understanding probability. That holds for electoral forecasts expressed in terms of probability, where voters are likely to read “a 75% chance of victory for Candidate X” as “Candidate X has it in the bag”. They can also mistake a probability forecast for the percentage of votes a candidate is expected to get, as shown by an experiment where the same results are presented as “win probability”, as “poll averages”, or as both. The effects are pretty noticeable - it appears that when test subjects are told that a candidate has a 60% chance of winning they tend to make both errors:

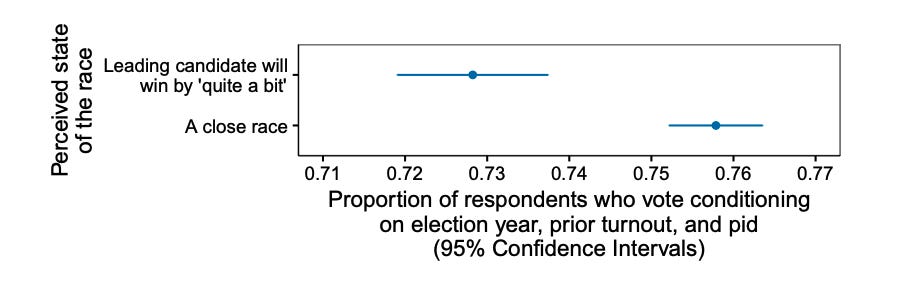
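
To make the gap between the two numbers concrete, here is a minimal sketch of how an expected vote share might translate into a win probability. It is not the paper's method or any forecaster's actual model: it assumes a two-candidate race, a normally distributed forecast error, and a made-up error of 3 percentage points. The function name `win_probability` and all of the inputs are hypothetical.

```python
from statistics import NormalDist


def win_probability(expected_share: float, error_sd: float) -> float:
    """Chance that the final two-party vote share lands above 50%.

    Assumes the forecast error is normally distributed around the expected
    share, which is a simplification made only for illustration.
    """
    return 1.0 - NormalDist(mu=expected_share, sigma=error_sd).cdf(50.0)


# Hypothetical inputs: expected vote shares in percent, error of 3 points.
for share in (50.5, 51.0, 52.0, 53.0, 55.0):
    prob = win_probability(share, error_sd=3.0)
    print(f"expected vote share {share:.1f}% -> win probability {prob:.0%}")
```

Under these assumptions, an expected vote share of 52% works out to a win probability of roughly 75%. A race that close is far from “in the bag”, even though the probability sounds lopsided.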

There are a lot of cool extensions in here. The authors turn the same setup into a game, where participants are asked to make bets using either “expected win probability” or “expected vote share”. The people in the probability group were, as expected, routinely overconfident. The authors also use an error in the 538 forecasts during the 2018 Congressional elections to show that betting markets wildly overreacted to changes in win probability. And this overconfidence has costs - overconfident voters were substantially less likely (2.5 percentage points) to vote:

The Upshot

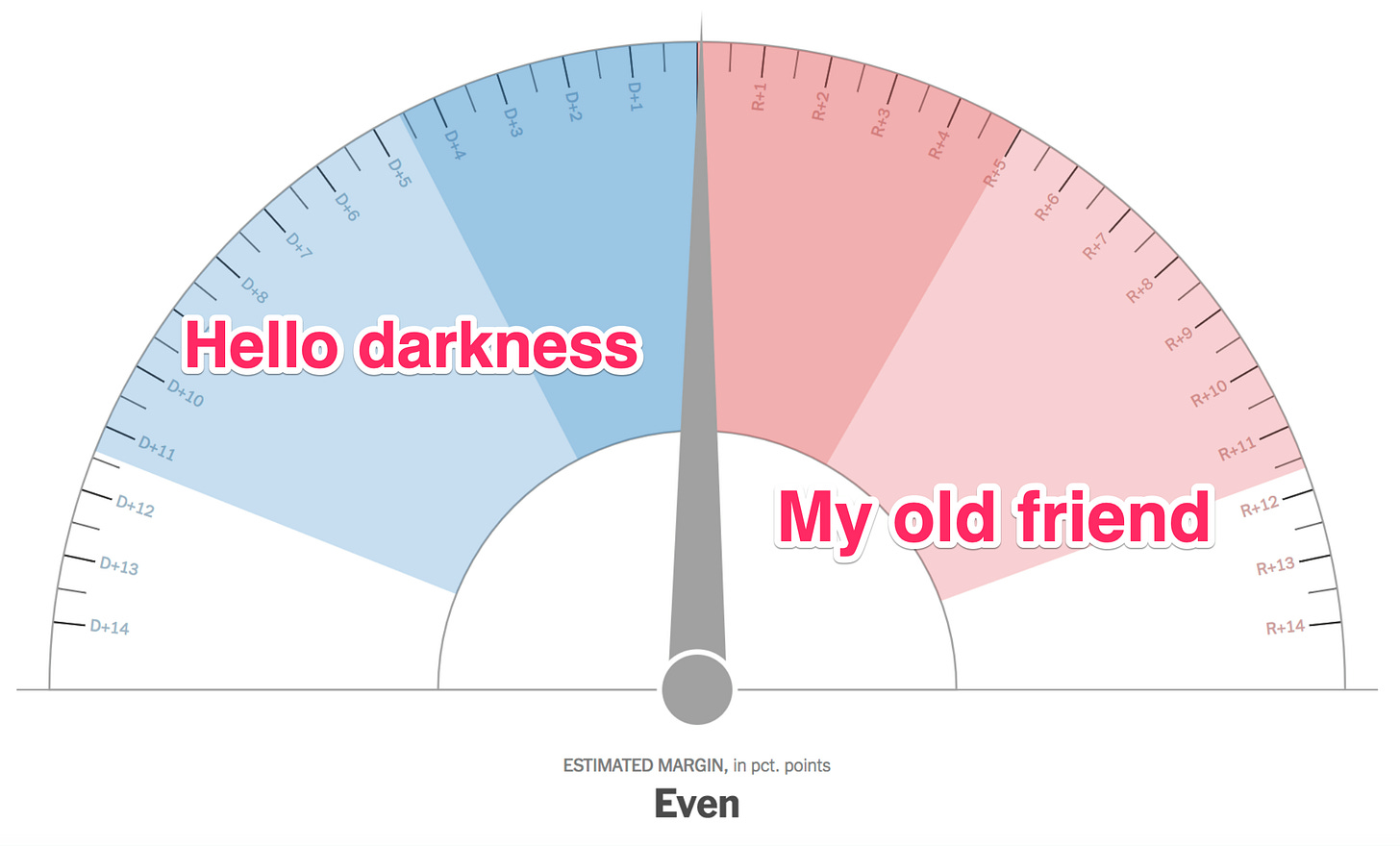

These types of probability forecasts have become very popular in political media - but not uniformly. FiveThirtyEight is well-known for its forecast, and the infamous “needle” from the New York Times’ The Upshot [NYT] is burned into the minds of many political junkies.

In fact, probabilistic election forecasting has propagated primarily across left-leaning media, with much less presence in right-leaning media. There are no prominent websites with right-leaning audiences that maintain probabilistic forecasts - the main right-leaning site with poll aggregation, RealClearPolitics, only does poll averaging [RCP]. As the authors show, these forecasts are mentioned much more on news channels catering to left-leaning audiences. In short, if you’re liberal and keeping politically informed, you’re getting flooded with confusing and misleading messaging about the state of the election!

I think it’s best not to pay much attention to the forecasts. Even if you’re a statistician it’s hard to fully internalize the information being imparted, and looking closely at these forecasts is likely to breed complacency about the state of the race. I think it’s also worth asking yourself if you really, truly understand what it means to assign a probability to a one-time event that is taking place under truly unique circumstances. I certainly don’t, and as a result I have to recommend behaving as though the forecasts don’t exist. There are a lot more productive ways to deal with election anxiety, like donations and volunteer shifts for candidates you support who are running tough races.

And keep in mind that no matter what probabilities and forecasts say today, it’s always a decent bet that by tomorrow everything will change.